The Center for High-Throughput Computing at the University of Wisconsin has revolutionized the ability of UW researchers, particularly neuroscientists, to process and analyze large-scale data sets.

Last Friday, as part of the Wisconsin Science Festival, UW faculty members discussed the ways in which neuroscience research has benefited from UW’s high-throughput computing resources.

The center, established in 2006, allows researchers on campus to perform large-scale computing. High-throughput computing, or HTC, becomes necessary when a process takes too long to complete on a single server or computer. The center is an open resource, meaning that anyone has access to it, and all standard services are free of charge.

Barbara Bendlin is an assistant professor at the Wisconsin Alzheimer’s Disease Research Center. Her research group uses HTC to study cognitive impairment associated with brain aging and Alzheimer’s disease. Bendlin’s studies involve large multi-modal data sets from hundreds of subjects.

The information contained in one brain scan would take 48 hours to process using a standard computer. However, with HTC software, data from 300 subjects takes only about 14 hours to process.
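To put those figures in perspective, the arithmetic can be sketched in a few lines of Python. This is only a rough illustration, not the center's own accounting: it assumes one scan per subject and that a single computer would process the scans one after another. The function names are hypothetical.

```python
# Figures quoted in the article.
HOURS_PER_SCAN = 48   # one brain scan on a standard computer
SUBJECTS = 300        # subjects in the data set
HTC_HOURS = 14        # approximate time for all subjects with HTC

def serial_hours(subjects, hours_per_scan):
    """Total hours if one machine processed every scan back to back.

    Assumes one scan per subject (an assumption, not stated in the article).
    """
    return subjects * hours_per_scan

def speedup(subjects, hours_per_scan, htc_hours):
    """How many times faster the HTC run is than the serial estimate."""
    return serial_hours(subjects, hours_per_scan) / htc_hours

total = serial_hours(SUBJECTS, HOURS_PER_SCAN)
print(f"Single computer: {total} hours (about {total // 24} days)")
print(f"With HTC: about {HTC_HOURS} hours")
print(f"Rough speedup: {speedup(SUBJECTS, HOURS_PER_SCAN, HTC_HOURS):.0f}x")
```

Under those assumptions, a single computer would need 14,400 hours, or about 600 days, which makes the HTC run roughly 1,000 times faster.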

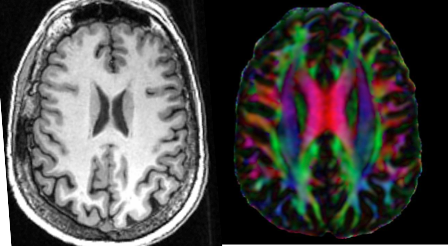

Bendlin’s laboratory uses a brain imaging technique called diffusion tensor imaging, which measures the diffusion of water molecules to map connections between brain regions involved in memory and cognition. By deconstructing water diffusion, researchers can reconstruct the bundles of axons that wire up different parts of the brain, Bendlin said.

According to Bendlin, individuals who show no signs of cognitive impairment can still exhibit neurobiological signs of Alzheimer’s disease. These signs include altered diffusion patterns in brain circuits involved in memory.

“People who show more evidence of pathology have greater diffusion,” Bendlin said.

Because brain imaging is non-invasive, it allows doctors and researchers to investigate brain structure and activity patterns in both healthy and diseased individuals.

Mike Koenigs, associate professor of psychiatry at UW, said the pathophysiological processes underlying various brain disorders can be visualized using scans that measure structure and activity within the brain in real time.

“Pathological levels of certain emotional characteristics derive from abnormal structure and function of the human brain,” Koenigs said.

Koenigs studies the neurobiology of psychopathy. One technique his laboratory uses is functional magnetic resonance imaging, which measures brain activity based on blood flow. This allows Koenigs to delineate altered patterns of brain activity in psychopathic versus non-psychopathic criminal offenders.

“Psychopathy is associated with dysfunction in a distributed network of brain regions involved in reward, emotion and decision making,” Koenigs said.

In his research, Koenigs is able to analyze data sets from 100 to 200 inmates in a matter of days. Software made available by the center allows researchers to analyze millions of images, simulate thousands of events, animate frames and put together movies.

“The computing framework was pioneered on this campus,” Miron Livny, director of the Center for HTC, said. “[We] translated this framework into software that has played an important role in research of [scientists].”

It takes multiple disciplines to model brain networks and their connections. According to Barry Van Veen, professor of electrical and computer engineering, methods such as diffusion tensor imaging and functional magnetic resonance imaging offer limited temporal resolution. Van Veen instead models electrical activity in the brain using a signal processing approach.

“[Signal processing is] the art and science of extracting useful information from data,” Van Veen said.

Van Veen said cells in the brain communicate via small electrical currents. These currents sum to generate electric fields that can be detected as fluctuations in voltage. By measuring electric fields at the scalp surface, researchers can measure neural activity on a millisecond time scale.

“We now know that the way our brain functions is by engaging a number of areas in concert,” Van Veen said.

With many subjects being tested across many experimental conditions over time, recordings of brain activity become more complex and require more computing power. To keep up with increasingly complex data and better understand brain function, HTC becomes increasingly necessary.

“Extremely large data sets may be analyzed computationally to reveal patterns, trends and associations, especially relating to human behavior and interactions,” Bendlin said.